Introduction to Remote Sensing

Remote sensing is "the process of detecting and monitoring the physical characteristics of an area by measuring its reflected and emitted radiation at a distance from the targeted area" (USGS 2019).

While remote sensing is commonly used as a synonym for satellite data, the concept of remote sensing can also be applied to aerial photography or lidar from drones. The remote part of remote sensing means that you are gathering data from a distance.

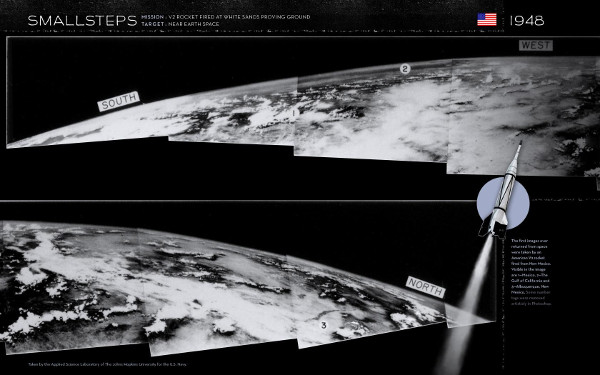

A Brief History of US Satellite Imagery

The first images of the earth from space were captured in 1947 from a camera placed in a sub-orbital German V-2 rocket repurposed by the US after WW II.

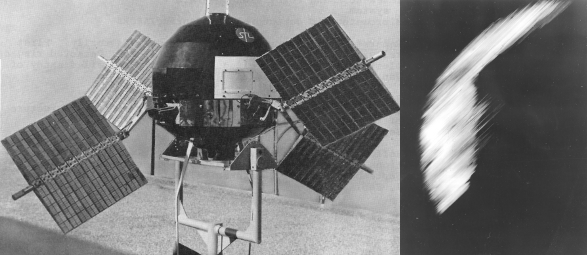

Although the Soviet Union beat the US into orbit with Sputnik I on 4 October 1957, the United States was the first to return crude satellite images, taken by a television camera onboard Explorer VI on 14 August 1959.

The then-secret Corona defense intelligence satellite project would have beaten Explorer VI into space by a few months, but a string of technical failures delayed the first images until CORONA mission XIV on 18 August 1960 (CIA 2015). The satellites used film cameras to capture high-resolution images that were then returned to earth in a re-entry capsule that was captured mid-air by a recovery airplane. As befits a Cold War-era project, the first high-resolution image from space, taken on that mission, was of the Russian Mys Shmidta Airfield.

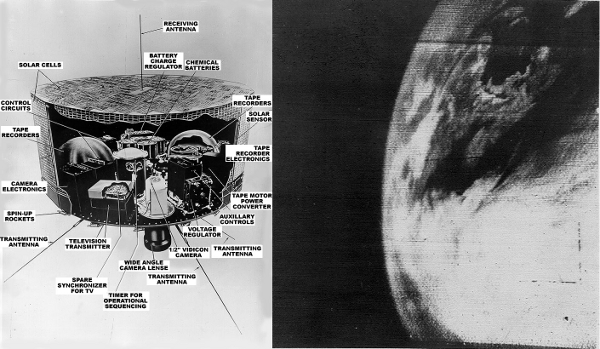

The first truly functional civilian satellite remote sensing system was the Television Infrared Observation Satellite (TIROS) series, the first of which launched on 1 April 1960. This inaugurated the use of satellites for weather observation and forecasting.

Applications of Remote Sensing

Satellite data and imagery have a wide variety of uses in the natural sciences in addition to their military and commercial value.

As an introduction to the wide variety of (perhaps unexpected) uses for remotely sensed data, skim this list of 100 Earth Shattering Remote Sensing Applications and Uses.

Spatial Resolution

Raster vs. Vector Data

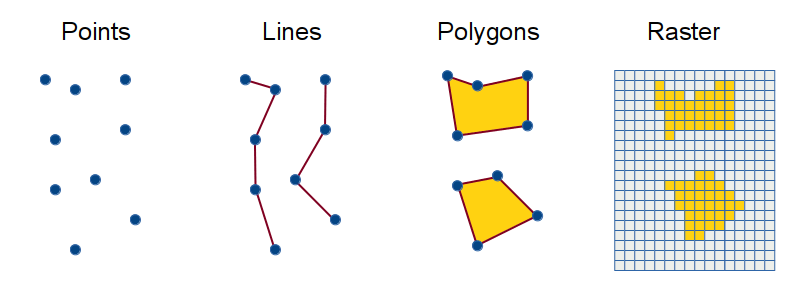

Satellites almost always capture data as raster data.

Raster data represent characteristics of areas on the earth as regular grids of rectangular pixels.

Raster data is often contrasted in GIS with vector data. Vector data stores locations as discrete geometric objects: points, lines or polygons.

Geographic phenomena can usually be represented using either vectors or rasters, but some types of phenomena are better suited to one or the other.

- Vector data is generally more useful for representing human creations that have clear boundaries, like built structures or political boundaries.

- Raster data is generally more useful for environmental characteristics that do not have clear or stable boundaries, like elevation or vegetation types.
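To make the contrast concrete, the following minimal Python sketch stores a made-up patch of ground both ways. The elevation values, the 30-meter cell size, the origin coordinates, and the names `elevation_raster`, `building_footprint`, `cell_center`, and `polygon_area` are all hypothetical and chosen only for illustration.

```python
# Raster: a regular grid of cells, each holding one value (here, elevation in meters).
# All numbers below are invented for illustration.
elevation_raster = [
    [212.0, 213.5, 215.1],
    [211.2, 212.8, 214.0],
    [210.7, 211.9, 213.3],
]
CELL_SIZE_M = 30.0                          # assumed ground size of one square cell
ORIGIN_X, ORIGIN_Y = 500000.0, 4600000.0    # assumed upper-left corner (projected meters)

# Vector: a discrete geometric object described by its vertex coordinates.
building_footprint = [
    (500010.0, 4599990.0),
    (500040.0, 4599990.0),
    (500040.0, 4599960.0),
    (500010.0, 4599960.0),
]

def cell_center(row, col):
    """Return the (x, y) ground coordinate at the center of a raster cell."""
    x = ORIGIN_X + (col + 0.5) * CELL_SIZE_M
    y = ORIGIN_Y - (row + 0.5) * CELL_SIZE_M   # rows count downward from the top edge
    return x, y

def polygon_area(vertices):
    """Area of a simple polygon using the shoelace formula."""
    total = 0.0
    for i, (x1, y1) in enumerate(vertices):
        x2, y2 = vertices[(i + 1) % len(vertices)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0

print(cell_center(0, 0))                 # where the first raster cell sits on the ground
print(polygon_area(building_footprint))  # area of the vector polygon in square meters
```

In the raster, location is implied by a cell's position in the grid, while in the vector object every vertex carries its own coordinates. That difference is why rasters suit continuous surfaces and vectors suit features with crisp boundaries.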

The level of spatial resolution determines the level of detail that can be distinguished in a remotely sensed image. As you zoom in closer to the earth, the amount of information available diminishes and the images appear more fuzzy.

High resolution data is generally desired for more accurate analysis. However, higher resolution data also requires more storage space and processing power, and is harder and more expensive to capture. Deciding on what spatial resolution is appropriate for your work is often a trade-off between how accurate your analysis needs to be and how large your budget can be.

Spatial resolutions for satellite data commonly vary from one kilometer (MODIS), to 30 meters (Landsat), to five centimeters for military intelligence satellites.
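As a rough illustration of the storage side of this trade-off, the sketch below counts how many pixels are needed to cover the same area at each of these resolutions. The 100 km by 100 km study area, the one-byte-per-pixel assumption, and the function name `pixels_for_area` are hypothetical; real products store multiple bands and are usually compressed.

```python
def pixels_for_area(width_km, height_km, resolution_cm):
    """Number of square pixels needed to cover a rectangular area.

    Works in whole centimeters so the arithmetic stays exact.
    """
    width_cm = width_km * 100_000
    height_cm = height_km * 100_000
    cols = -(-width_cm // resolution_cm)    # ceiling division
    rows = -(-height_cm // resolution_cm)
    return rows * cols

# Resolutions mentioned in the text, expressed in centimeters per pixel.
resolutions_cm = {
    "MODIS (1 km)": 100_000,
    "Landsat (30 m)": 3_000,
    "5 cm imagery": 5,
}

for name, resolution in resolutions_cm.items():
    count = pixels_for_area(100, 100, resolution)
    # Assumes one byte per pixel for a single uncompressed band.
    print(f"{name}: {count:,} pixels, roughly {count / 1e9:,.3f} GB per band")
```

Because pixel count grows with the square of the improvement in resolution, moving from 30 meters to 5 centimeters multiplies the data volume by several hundred thousand.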

Swath

Because technical and cost limitations restrict how much detail a satellite sensor can capture at any one time, satellite data capture follows a narrow path, or swath, along the ground. The width of this swath varies between satellite systems based on their purpose.

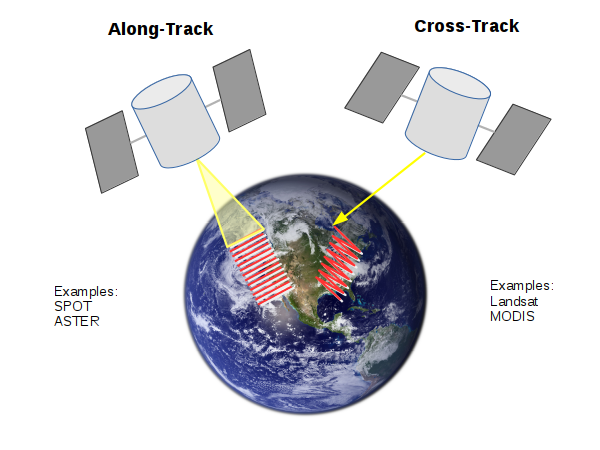

Satellites can scan these swaths in two different ways:

- Along-track scanning captures the width of the swath all at one time, similar to using a pushbroom to sweep a floor. This requires a complex and precise optical system that places technical limits on the width of the swath.

- Cross-track scanning constantly scans back and forth across the swath, similar to using a whisk broom to sweep a floor side to side. This is commonly done with a rotating mirror that permits a wide swath to be covered by a simpler optical system than an along-track scan. However, this also requires complex correction of distortions introduced by the scanning motion.

Temporal Resolution

The temporal resolution of available satellite data is how frequently data is available for any particular location on the surface of the earth. Some satellite systems return to the same location daily, while others that are designed to observe the entire earth may take days or weeks to return to the same location.

Temporal resolution is determined by the orbit of a satellite, which is the path that the satellite follows as it flies around the earth. The orbit is designed so that the centrifugal force of the satellite circling the planet counterbalances the pull of gravity and keeps the satellite aloft.

Orbits can have a number of different characteristics, which involve different types of movement relative to the earth:

- Altitude: How high the orbit is above the surface of the earth.

  - Low Earth Orbit is used by the International Space Station.

  - Medium Earth Orbit is used by the GPS constellation of satellites (12,552 mile altitude) and the other GNSS satellite systems.

- Inclination: The angle of the orbit relative to the equator. Many remote sensing satellites use near-polar orbits that circle nearly over the poles (inclination of almost 90°), permitting systematic coverage of the entire surface of the earth.

- Eccentricity: Whether the orbit is circular or forms an ellipse.

- Synchronicity: The timing of the orbit relative to the rotation of the earth or the position of the sun.

  - With a geostationary orbit in a band 22,236 miles above the equator, the time needed to complete one orbit is the same as the time the earth takes to rotate once, so the satellite effectively stays in the same place in the sky and can constantly observe or communicate with a fixed region of the earth. Geostationary orbits are commonly used for communications satellites that relay signals between ground stations on the earth.

  - With a sun-synchronous near-polar orbit, the satellite passes over any given location at about the same local solar time on each pass, so it can systematically cover land under consistent sunlight.
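The link between altitude and orbital timing can be sketched with Kepler's third law for a circular orbit, which gives the period as 2π times the square root of (orbit radius cubed divided by the earth's gravitational parameter). The code below is a minimal illustration rather than mission-planning software. The function name `orbital_period_seconds` is hypothetical, the constants are standard published values for the earth, and the roughly 250-mile altitude used for the International Space Station is an assumption that does not come from the text above.

```python
import math

EARTH_MU_KM3_S2 = 398_600.4418   # earth's gravitational parameter (G times earth's mass)
EARTH_RADIUS_KM = 6_378.137      # equatorial radius
KM_PER_MILE = 1.609344

def orbital_period_seconds(altitude_miles):
    """Period of a circular orbit at the given altitude above the earth's surface."""
    orbit_radius_km = EARTH_RADIUS_KM + altitude_miles * KM_PER_MILE
    return 2 * math.pi * math.sqrt(orbit_radius_km ** 3 / EARTH_MU_KM3_S2)

orbits = {
    "Low Earth Orbit (ISS, assumed ~250 miles)": 250,
    "Medium Earth Orbit (GPS, 12,552 miles)": 12_552,
    "Geostationary (22,236 miles)": 22_236,
}
for name, altitude in orbits.items():
    hours = orbital_period_seconds(altitude) / 3600
    print(f"{name}: {hours:.2f} hours per orbit")
```

Under these assumptions the geostationary altitude yields a period just under 24 hours, matching one rotation of the earth, while the low orbit circles the planet in roughly an hour and a half.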

While temporal resolution of captured data is determined by the orbit, the temporal resolution of usable data can be affected by cloud cover. If an area is obscured by clouds when the satellite passes over, that data may be unusable and no data can be captured for that area until the satellite passes again when there are no clouds or fewer clouds. Some sources like MODIS compensate for this by combining clear data from multiple passes. While this reduces the temporal resolution, it improves the availability of data.
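The idea of combining clear data from multiple passes can be sketched as a simple per-pixel composite. This is only an illustration of the general idea, not the algorithm used for any MODIS product: the function name `clear_sky_composite`, the use of `None` to mark cloudy pixels, and the sample values are all hypothetical.

```python
def clear_sky_composite(passes):
    """Combine several passes over the same grid, keeping the most recent clear value.

    `passes` is a list of 2-D grids ordered oldest to newest; cloudy pixels are None.
    """
    rows, cols = len(passes[0]), len(passes[0][0])
    composite = [[None] * cols for _ in range(rows)]
    for grid in passes:                      # later passes overwrite earlier ones
        for r in range(rows):
            for c in range(cols):
                if grid[r][c] is not None:   # only clear pixels contribute
                    composite[r][c] = grid[r][c]
    return composite

# Three invented passes over a 2 x 3 area; None marks cloud cover.
day_1 = [[0.41, None, 0.39], [0.52, 0.50, None]]
day_2 = [[None, 0.44, None], [0.53, None, None]]
day_3 = [[0.43, None, None], [None, None, None]]

print(clear_sky_composite([day_1, day_2, day_3]))
```

In this example the lower-right pixel stays `None` because it was cloudy on all three days, which shows why a longer compositing window improves coverage at the cost of temporal detail.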

Spectral Resolution

Remote sensing takes advantage of the emission and reflection of electromagnetic radiation by objects on the surface of the earth to capture what is where on the surface of the earth. The spectral resolution of remotely sensed data is the type of electromagnetic radiation represented in the data.

Electromagnetic Radiation

Objects reflect, absorb, and emit energy in a unique way, and at all times. Electromagnetic radiation originates from the vibration of electrons, atoms, and molecules, and is emitted in waves that are able to transmit energy from one place to another.

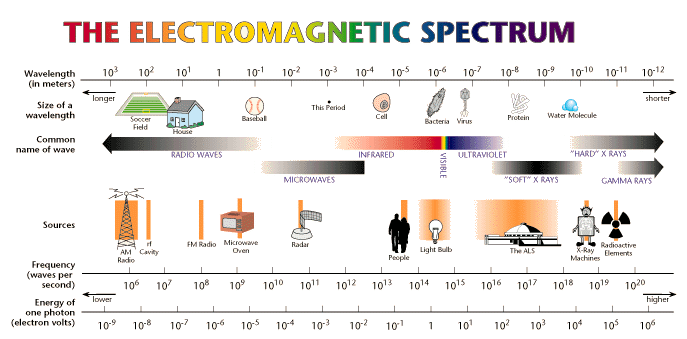

Different types of electromagnetic radiation are distinguished by the speed of their vibration.

- As these waves travel through space at 300,000 kilometers per second (186,000 miles/sec), the distance between the vibrating pulses is called the wavelength and is usually measured in meters or nanometers (one billionth of a meter).

- The speed of the vibration is called frequency and is usually measured in Hertz (1 Hz = one vibration per second).

- Frequency and wavelength are inversely related, and a specific type of electromagnetic radiation can be specified with frequency or wavelength.
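Because the speed of light is fixed, the inverse relationship is simply frequency = speed of light / wavelength. The following sketch converts between the two. The function names are hypothetical, and the constant is the standard defined value for the speed of light in a vacuum.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # meters per second (about 300,000 km/s)

def wavelength_nm_to_frequency_thz(wavelength_nm):
    """Convert a wavelength in nanometers to a frequency in terahertz."""
    wavelength_m = wavelength_nm * 1e-9
    return SPEED_OF_LIGHT_M_S / wavelength_m / 1e12

def frequency_thz_to_wavelength_nm(frequency_thz):
    """Convert a frequency in terahertz to a wavelength in nanometers."""
    frequency_hz = frequency_thz * 1e12
    return SPEED_OF_LIGHT_M_S / frequency_hz * 1e9

for nm in (380, 700):   # approximate limits of visible light
    print(f"{nm} nm is about {wavelength_nm_to_frequency_thz(nm):.0f} THz")
```

Shorter wavelengths give higher frequencies, so violet light at 380 nanometers vibrates nearly twice as fast as deep red light at 700 nanometers.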

The higher the temperature of an object, the faster its electrons vibrate and the shorter its peak wavelength of emitted radiation. Conversely, the lower the temperature of an object, the slower its electrons vibrate, and the longer its peak wavelength of emitted radiation.
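This relationship is commonly expressed as Wien's displacement law, where $T$ is the temperature of the object in kelvins and $b$ is a physical constant:

$$\lambda_{\text{peak}} = \frac{b}{T}, \qquad b \approx 2.898 \times 10^{-3}\ \text{m} \cdot \text{K}$$

As a rough worked example, assuming a surface temperature of about 5,800 K for the sun and about 288 K for the earth (approximate values that are not taken from this text):

$$\lambda_{\text{peak, sun}} \approx \frac{2.898 \times 10^{-3}}{5800} \approx 5.0 \times 10^{-7}\ \text{m} = 500\ \text{nm}$$

$$\lambda_{\text{peak, earth}} \approx \frac{2.898 \times 10^{-3}}{288} \approx 1.0 \times 10^{-5}\ \text{m} = 10{,}000\ \text{nm}$$

Under these assumptions, sunlight peaks within the visible range, while the much cooler surface of the earth emits most strongly at far longer infrared wavelengths.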

The fundamental unit of electromagnetic phenomena is the photon, the smallest possible amount of electromagnetic energy of a particular wavelength. Photons are units of energy rather than matter, so they have no mass. The energy of a photon determines the frequency (and wavelength) of light that is associated with it. The greater the energy of the photon, the greater the frequency and vice versa.
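The link between photon energy $E$, frequency $\nu$, and wavelength $\lambda$ can be written with Planck's constant $h \approx 6.626 \times 10^{-34}\ \text{J} \cdot \text{s}$ and the speed of light $c \approx 2.998 \times 10^{8}\ \text{m/s}$:

$$E = h\nu = \frac{hc}{\lambda}$$

As an illustrative calculation (the two wavelengths are simply the approximate ends of the visible range), a photon of red light at 700 nm and a photon of violet light at 380 nm carry:

$$E_{700\ \text{nm}} \approx \frac{(6.626 \times 10^{-34})(2.998 \times 10^{8})}{700 \times 10^{-9}} \approx 2.84 \times 10^{-19}\ \text{J}$$

$$E_{380\ \text{nm}} \approx \frac{(6.626 \times 10^{-34})(2.998 \times 10^{8})}{380 \times 10^{-9}} \approx 5.23 \times 10^{-19}\ \text{J}$$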

Electromagnetic radiation is a part of our lives in many ways. Different frequencies of electromagnetic radiation have different propagation characteristics. These characteristics make different frequencies of electromagnetic radiation useful for different types of remote sensing.

- Radio waves (100,000 km - 1 millimeter) are used for communication through radio/TV broadcast, cellphone signals, GPS signals, signals sent through communications satellites, etc. Different frequencies of radio waves pass through or are reflected from different types of materials.

- Infrared radiation (1 millimeter - 700 nanometers, at frequencies just below the red visible light we can see) is associated with heat and can often be used in situations where there is little visible light (night vision). Photosynthetic plants reflect infrared radiation (to avoid overheating), so infrared radiation can be used in conjunction with visible light to detect vegetation.

- Visible light (380 - 700 nanometers) is a form of electromagnetic radiation that we can see and which we can create with light bulbs. It is useful for capturing the way we see the world from above. However, visible light does not travel through objects or thick gases like clouds.

- X-rays (0.01 nm - 10 nm) pass through soft human tissue and are used for medical diagnosis.

Electromagnetic radiation is different from particle radiation, which results from subatomic particles being thrown off by nuclear reactions, and is associated with radioactive materials like uranium and nuclear power plants. Particle radiation is often associated with electromagnetic radiation, but the primary health concern with any kind of radiation is ionization, which occurs when radiation pushes electrons out of atoms and leaves them as ions with a positive charge. With living cells, this ionization damages the cell DNA and can lead to cell death or mutations and cancer.

Bands

While early satellites captured only panchromatic (grayscale) visible light, contemporary satellites often have sensors that capture different ranges of frequencies or bands of electromagnetic radiation.

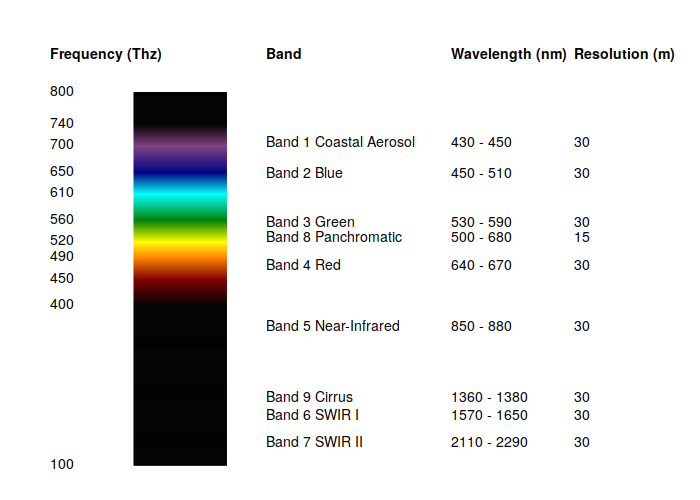

Satellites capture ranges of frequencies in bands. The spectral resolution of a satellite sensor is usually specified by the numbers of different bands available and the ranges of frequencies covered by each band. The appropriate spectral resolution depends on the purpose of the satellite.

For space imagery we are usually most interested in the red (430-480 THz), green (540-580 THz), and blue (610-670 THz) bands that the three different types of cone cells in our eye retinas can detect as visible light colors.

The diagram below shows the relationship of Landsat bands 1 - 9 to the visible light spectrum. Bands 10 and 11 captured by the Thermal Infrared Sensor (TIRS) are much lower frequencies and are not shown.

Analysis with Raster Data

While aerial and space-based imagery can have value in and of itself for visual interpretation, there are a variety of technical methods for extracting useful information from raster data.

NDVI

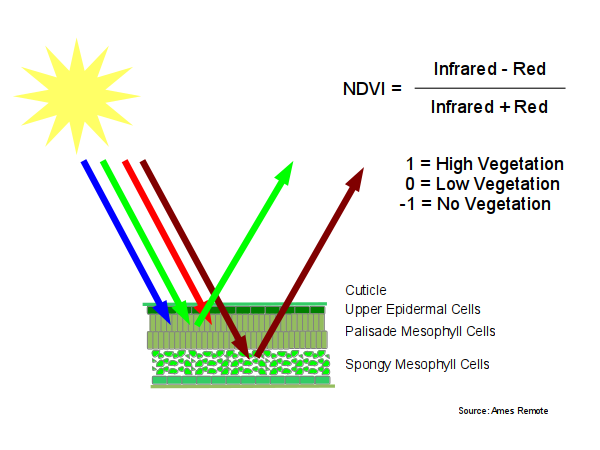

Different bands of electromagnetic radiation are useful for analyzing different types of phenomena. For example, a combination of red and near-infrared bands called the normalized difference vegetation index (NDVI) can be used to determine levels of vegetation in a particular area.

NDVI is especially useful for biologists and biogeographers involved in the study of phenology, which deals with the relations between climate and seasonal biological phenomena. In agriculture, NDVI can be used to identify areas of a farm or field that are not growing well.

NDVI is based on the fact that photosynthetic green plants tend to reflect infrared light (to avoid overheating) and green light (which is why they appear green to our eyes), but absorb red light to power the process of photosynthesis.

This phenomenon can be used with the Landsat 9 near-infrared band (band 5) and red band (band 4) to calculate an index that is highest in areas with large amounts of vegetation and lower in areas with little vegetation.

The range of the index is negative one to positive one.

When near infrared is high and red is low, that is when living photosynthetic plants are reflecting infrared and absorbing red, making NDVI high and closer to one.

$$\text{NDVI} = \frac{\text{NIR} - \text{Red}}{\text{NIR} + \text{Red}} = \frac{1 - 0}{1 + 0} = 1$$

When near infrared is low and red is high, such as with bare ground or water, NDVI is low and closer to negative one.

$$\text{NDVI} = \frac{\text{NIR} - \text{Red}}{\text{NIR} + \text{Red}} = \frac{0 - 1}{0 + 1} = -1$$

The normalization specified in this formula provides NDVI values that are useful regardless of variations in the level of lighting or the angle of view. It also makes it possible to distinguish between photosynthetic vegetation and non-photosynthetic green areas (like artificial turf). Accordingly, this gives NDVI an advantage over simply looking at an RGB image for areas that appear green.
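A minimal sketch of the calculation in Python follows. The function name `ndvi`, the choice to return 0.0 where both bands are zero, and the reflectance values are assumptions for illustration; real workflows typically operate on whole band arrays read from imagery files.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel."""
    total = nir + red
    if total == 0:
        return 0.0          # assumed convention: avoid dividing by zero for empty pixels
    return (nir - red) / total

# Invented reflectance values (fraction of light reflected) for three surfaces.
samples = {
    "healthy vegetation": (0.50, 0.08),   # (near infrared, red)
    "bare soil": (0.30, 0.25),
    "water": (0.02, 0.05),
}
for surface, (nir, red) in samples.items():
    print(f"{surface}: NDVI = {ndvi(nir, red):.2f}")

# Normalization: dimmer lighting scales both bands by the same factor,
# so the index for the same surface stays the same.
print(ndvi(0.50, 0.08), ndvi(0.25, 0.04))
```

The last line shows the effect of the normalization: halving both band values, as might happen under weaker illumination, leaves the index unchanged because the scaling factor cancels out of the ratio.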

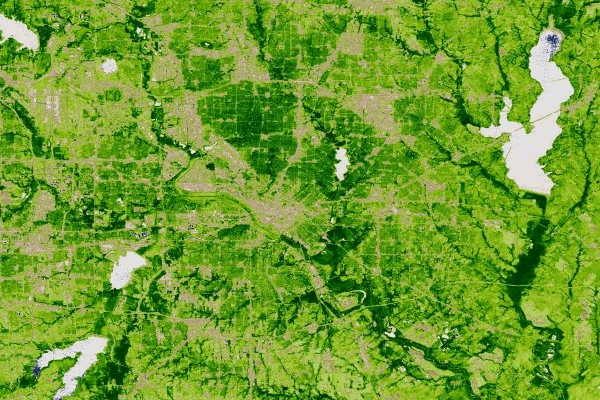

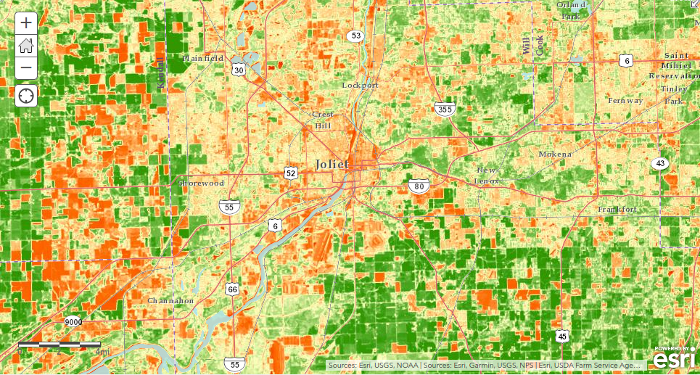

Because NDVI creates a set of values from -1 to +1 and has no color of its own, maps of NDVI are commonly visualized with false-color that assigns different colors to ranges of values and makes areas with high and low NDVI easier to distinguish. In the example below of the area around Joliet, IL, colors range from red (low NDVI = urban areas or fallow fields) to green (high NDVI = trees or growing agricultural fields).
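One simple way to build such a false-color rendering is to interpolate linearly between two colors. The sketch below is only an illustration: the function name `ndvi_to_rgb` and the plain red-to-green ramp are hypothetical, and mapping software usually offers richer color ramps.

```python
def ndvi_to_rgb(value):
    """Map an NDVI value in [-1, 1] to an (R, G, B) color from red (low) to green (high)."""
    clamped = max(-1.0, min(1.0, value))     # guard against out-of-range input
    fraction = (clamped + 1.0) / 2.0         # rescale -1..1 to 0..1
    red = round(255 * (1.0 - fraction))
    green = round(255 * fraction)
    return (red, green, 0)

for value in (-1.0, -0.5, 0.0, 0.5, 1.0):
    print(value, ndvi_to_rgb(value))
```

A continuous ramp like this preserves gradual differences, while assigning a fixed color to each range of values (as described above) makes broad categories easier to pick out.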

Classification

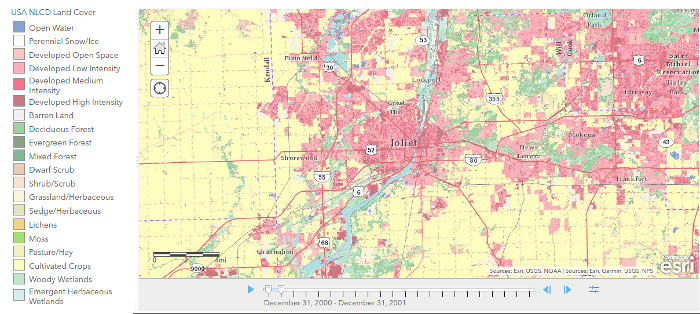

Classification of raster data is the process of grouping pixels of data into specific categories or classes. One common application of classification is land use or land cover classification, which determines how specific areas of land are being used (cropland, forest, residential, manufacturing, etc.). Such data can be used by governments for taxation or urban planning, or by researchers to analyze environmental changes that result from human activities (such as urban sprawl or deforestation).

One notable example in the US is the National Land Cover Database, which is maintained by a consortium of federal agencies and based on Landsat satellite imagery and other supplementary datasets.

There are three primary techniques for image classification (GISGeography 2021):

- Unsupervised image classification involves using software to find clusters of similar values in the image. Those clusters are then interpreted to figure out what they represent and assign descriptions. Common unsupervised classification algorithms include:

  - K-means

  - ISODATA

- Supervised image classification involves the initial manual selection of areas with known characteristics that are then used to train machine-learning software to classify the remaining areas of the image. Common supervised image classification techniques include:

  - Maximum likelihood

  - Minimum-distance

  - Principal component analysis

  - Support vector machine (SVM)

  - Iso cluster

- Object-based image analysis is an extension of supervised image classification that involves the aggregation of similarly classed pixels into vector polygons. This technique can be used to begin the process of digitizing vector polygon features (like buildings or roads) based on aerial or satellite imagery, although the digitized features usually need additional manual clean-up later. Common object-based image analysis implementations include:

  - Multi-resolution segmentation in eCognition

  - Segment mean shift in ArcGIS
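The difference between the unsupervised and supervised approaches can be sketched in a few lines of Python. This is a toy illustration, not how any GIS package implements these methods: the function names, the two-band pixel values, the starting cluster centers, and the training samples are all hypothetical.

```python
import math

def nearest(pixel, centers):
    """Index of the center closest to a pixel in spectral space (Euclidean distance)."""
    distances = [math.dist(pixel, center) for center in centers]
    return distances.index(min(distances))

def mean_point(points):
    """Component-wise mean of a non-empty list of equal-length tuples."""
    return tuple(sum(values) / len(points) for values in zip(*points))

def k_means(pixels, initial_centers, iterations=10):
    """Unsupervised: repeatedly assign pixels to the nearest center, then re-average."""
    centers = list(initial_centers)
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for pixel in pixels:
            groups[nearest(pixel, centers)].append(pixel)
        # Keep the old center if a cluster ends up empty.
        centers = [mean_point(g) if g else c for g, c in zip(groups, centers)]
    return [nearest(pixel, centers) for pixel in pixels]

def minimum_distance_classify(pixels, training):
    """Supervised: label each pixel with the class whose training mean is closest."""
    names = list(training)
    class_means = [mean_point(training[name]) for name in names]
    return [names[nearest(pixel, class_means)] for pixel in pixels]

# Invented (red, near-infrared) reflectance pairs for six pixels.
pixels = [(0.08, 0.50), (0.10, 0.45), (0.07, 0.55),
          (0.25, 0.30), (0.28, 0.27), (0.22, 0.33)]

# Unsupervised: clusters are only numbered, and an analyst interprets them afterward.
print(k_means(pixels, initial_centers=[pixels[0], pixels[3]]))

# Supervised: hand-labeled training samples define the classes in advance.
training = {
    "vegetation": [(0.09, 0.48), (0.06, 0.52)],
    "bare soil": [(0.26, 0.29), (0.24, 0.31)],
}
print(minimum_distance_classify(pixels, training))
```

K-means returns anonymous cluster numbers that still need interpretation, while the minimum-distance classifier returns named classes because the analyst supplied labeled examples up front.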

Ground Control and Downlinks

As with GPS, satellites used for remote sensing are controlled through a mission operations center (MOC). All contemporary satellite data is returned to earth with radio signals through downlink stations that then relay that data to the MOC for processing, storage and communication. The photo below is of a particularly remote downlink station in the Arctic used by the Landsat system.

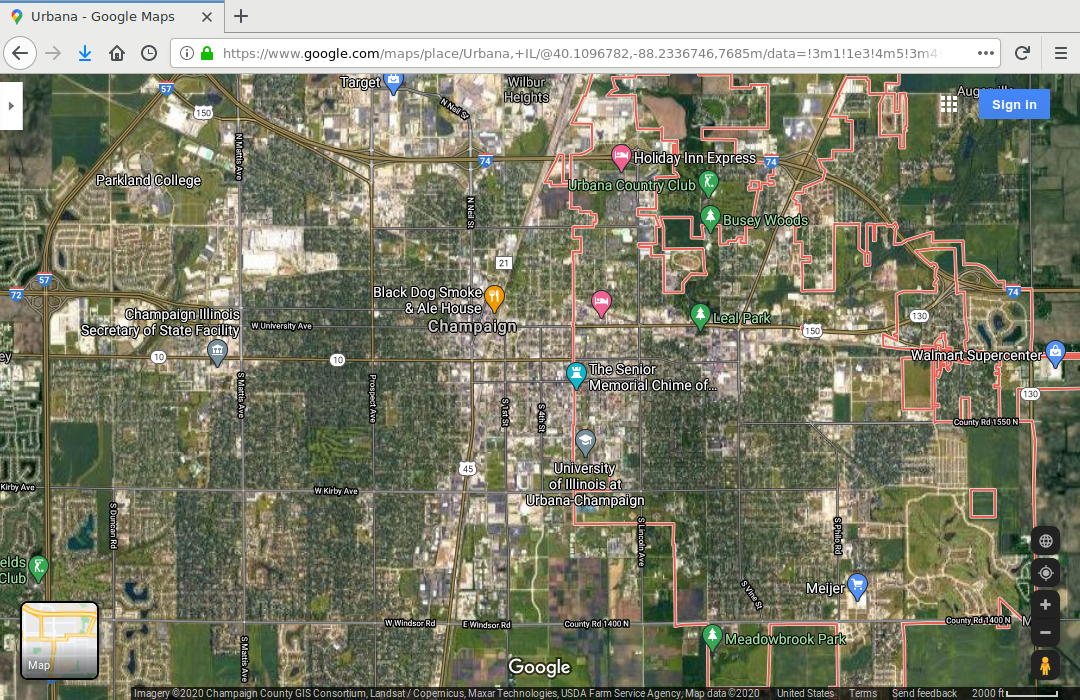

Google Maps / Earth

Despite the name, when using satellite view in Google Maps or Google Earth, what you are actually seeing is data from a variety of providers (both public and private) and a variety of platforms (satellites and airplanes) that has been compiled into a seamless whole by Google. Single scenes can actually be mosaics of data from multiple sources (Google 2020). Indeed, rather than giving a purely faithful picture of what you would see from space, this is an artistic representation of the Earth that is used to effectively communicate what is where so that users can interpret that data more clearly and act accordingly.

The original provider and date of the data being viewed depend on the area being viewed as well as the zoom level (how close or far away you are from the ground). The provider(s) and date of data capture for a particular location and zoom level are visible in Google Maps along the bottom of the window.

Threats to Satellite Systems

There are around 1,100 active satellites plus another 2,600 or so that have been decommissioned. This does not include as many as 500,000 pieces of "space junk" the size of a marble or larger that NASA tracks to help safeguard space operations. While most space junk will eventually return to earth, the constant addition of new satellites and launch stages, and the occasional collision of satellites (turning two satellites into multiple pieces of junk) means that the threat to space operations by space junk is increasing and irreversible.

As the list of space-faring nations grows, terrestrial conflicts could extend to actions against spaceborne systems, making military and civilian geospatial technology highly vulnerable to disruption or destruction by state and non-state actors.

All satellite systems are expensive to build, launch, maintain, and renew. As such, they are dependent upon political and economic support that is tenuous in the contemporary American political environment.